Published on May 16, 2022

This article was originally published on the NCSA website.

When the internet launched in the last century, it was an amazing feat of science and technology. But it was not designed for the massive data sets, machine-learning tools, advanced sensors and Internet of Things devices that have become central to research, business and even our homes.

Funded with a $20 million grant from the National Science Foundation, FABRIC (Adaptive Programmable Research Infrastructure for Computer Science and Science Applications) is exploring ways to replace an internet infrastructure that’s been showing its age for the last 20 years.

NCSA is one of 13 collaborating institutions helping to create a platform for testing novel internet architectures that could enable a faster, more secure internet, one better suited to today's users and future needs and able to do things that aren't possible now. The FABRIC project is led by the Network Research and Infrastructure Group at RENCI at the University of North Carolina at Chapel Hill.

NCSA: First with the Gigs

Last fall, NCSA installed a 100-gigabit network connection dedicated solely to the FABRIC project, becoming the first FABRIC collaborator to do so.

NCSA already had six 100-gigabit internet connections, says David Wheeler, leader of NCSA's ICI Data Management and Delivery Division and the principal investigator for NCSA's FABRIC node. The FABRIC connection, however, is reserved for research on FABRIC's objectives and links directly to FABRIC's 400-gigabit backbone.

Wheeler says the NCSA networking team is supporting the FABRIC design within the NCSA environment, hosting a FABRIC hank (network node) and an experimenter-facing storage system.

NCSA network engineer Corey Eichelberger installed the rack, coordinated the connections and ensured the FABRIC equipment is isolated from NCSA's other network nodes. The isolation is partly for security but mostly to keep the testbed fully accessible to experimenters. Matt Kollross, network engineering team lead, and network engineers Eric Boyer and Michael Douglas are also contributing expertise.

People don’t often think much about a network. Networking is a given from a lot of people’s perspectives. But I think it is the fascinating underpinnings of what we do. Science relies on the internet more and more. That need will just continue to grow. And the internet we have now won’t be able to keep up.

David Wheeler, NCSA ICI Data Management and Delivery Division Lead

He notes it’s the work of network engineers that makes it possible for people to have and use the internet, whether for personal or business use or scientific research.

Research Ramping Up

This is year three of the four-year FABRIC project, the point "where it's really getting exciting," say Wheeler and Anita Nikolich, an NCSA faculty affiliate, research scientist and director of research innovation at the iSchool at the University of Illinois Urbana-Champaign, and a co-PI on the FABRIC project.

This is the phase where collaborators can experiment and test; in effect, FABRIC is a networking laboratory. NCSA's grant funding and investment in the project are small, about $170,000, but its ability to offer networking insight, expertise and a safe environment where researchers can be creative and try new things is invaluable.

“FABRIC’s intent,” says Nikolich, “is a place that people can [try new things] and experiment to build the new internet, one that’s secure, censorship-resistant and supportive of big science. More than just a big network, one that really supports science.”

What NCSA is Researching

While FABRIC focuses on the United States, science is conducted globally. So Nikolich is the principal investigator on a supplemental grant, called FABRIC Across Borders (FAB), that takes the project international. FAB extends the FABRIC testbed by connecting the core North American infrastructure to four nodes in Asia, Europe and, eventually, South America, creating the networks science needs to move vast amounts of data across oceans and time zones seamlessly and securely.

A key FAB collaborator, says Nikolich, is the NCSA team working on Rubin Observatory's Legacy Survey of Space and Time (LSST).

“Basically, FABRIC is going to serve as a testbed to try out how to get the data streams in the hands of scientists more quickly. And once it’s proven out on FABRIC, LSST ideally will include it in their science workflow,” says Nikolich.

NCSA's LSST team is experimenting with tweaks to the software used to transmit data to the data brokers. The focus is on optimizing the alert streams so that when the telescope becomes operational in Chile later this year, scientists will receive alerts faster than the current software would allow.

Because FAB currently has no nodes in South America, the team emulates the network connection instead. They begin by running Docker in a long-lived FABRIC slice (a set of testbed resources reserved for an experiment) and emulating the processing to get the test alert streams running. (Docker is a container platform; a container packages code and its dependencies together so the software runs the same way regardless of the underlying infrastructure.) The next step will be to emulate the path from Chile to the FABRIC node at NCSA.

“We want to make sure that they’re testing how to do this particular networking angle,” explains Nikolich, “so that when real-life networking is installed during the next two years, they already have the optimized software. FABRIC is helping prepare this workflow.”

An AI Internet That’s Also Secure

Other FABRIC collaborators are focusing on cybersecurity, censorship evasion and artificial intelligence (AI), says Nikolich.

Censorship Evasion

For example, she says, an experimenter at Clemson is exploring how to make the future internet censorship-resistant so that communication remains free and open. It's an important societal issue, she notes, as we've recently seen entire countries disconnected from the internet. Network design is crucial to building barriers against censorship.

AI Innovations

The advanced chips in the FABRIC nodes have already prompted some AI experiments, and more are expected in the next few months. These include in-network processing and adversarial AI.

In-network processing, explains Nikolich, is just what it sounds like. In traditional networking, the packets (small amounts of data sent over a network) are reassembled into a single file or another contiguous block of data at the receiving end, and only then processed.

With in-network processing, software processes the data while it is still crossing the network, filtering out what isn't needed or adding value through analysis or machine learning. Nikolich says physicists at CERN are experimenting with in-network processing, transmitting data from CERN to Chicago and to NCSA. "It's just more efficient," she notes.

“For scientists, it’s huge,” says Nikolich. “They don’t have to ship a ton of data and wait. They can do it along the path.”
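To make the contrast concrete, here is a toy Python sketch; it is not FABRIC or CERN code. It assumes packets are simple objects carrying numeric readings and that the "processing" is a hypothetical threshold filter. The names `Packet`, `make_stream`, `traditional` and `in_network_filter` are illustrative only; real in-network processing would run on programmable network hardware along the path rather than in a Python function.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, List


@dataclass
class Packet:
    seq: int              # position of this packet in the original stream
    payload: List[float]  # hypothetical instrument readings carried by the packet


def make_stream(readings: List[float], per_packet: int) -> Iterator[Packet]:
    """Split a list of readings into fixed-size packets (toy model)."""
    for seq, start in enumerate(range(0, len(readings), per_packet)):
        yield Packet(seq, readings[start:start + per_packet])


def traditional(packets: Iterable[Packet], threshold: float) -> List[float]:
    """Ship every packet to the receiver, reassemble, and only then filter."""
    received = sorted(packets, key=lambda p: p.seq)  # receiver restores order
    reassembled = [x for p in received for x in p.payload]
    return [x for x in reassembled if x > threshold]


def in_network_filter(packets: Iterable[Packet], threshold: float) -> Iterator[Packet]:
    """A hypothetical programmable hop that drops uninteresting readings in transit."""
    for p in packets:
        kept = [x for x in p.payload if x > threshold]
        if kept:  # packets with nothing of interest are never forwarded
            yield Packet(p.seq, kept)


if __name__ == "__main__":
    readings = [0.1, 5.2, 0.3, 0.2, 7.8, 0.4, 0.0, 0.1]

    # Traditional path: all 8 readings cross the network before filtering.
    print("traditional result:", traditional(make_stream(readings, 2), 1.0))

    # In-network path: the hop filters along the way; the receiver just reassembles.
    forwarded = list(in_network_filter(make_stream(readings, 2), 1.0))
    delivered = [x for p in sorted(forwarded, key=lambda p: p.seq) for x in p.payload]
    print("in-network result:", delivered)
    print("readings shipped end to end:", sum(len(p.payload) for p in forwarded))
```

In this toy run both paths produce the same filtered result, but the in-network path delivers only 2 of the 8 readings to the receiver, which is the "do it along the path" saving in miniature.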

With two AI institutes at Illinois, Nikolich hopes to do more AI-focused experiments to show the power of in-network processing for AI.

Adversarial AI

Encouraging people to secure the next internet by thinking ahead is also part of the FABRIC process, say Nikolich and Wheeler. Even though it's open science, how do you protect it? What can an adversary do? How do we protect against that?

This is how to make the future internet more secure, they say: by thinking like an adversary now, not in 30 years.

The FABRIC Testbed

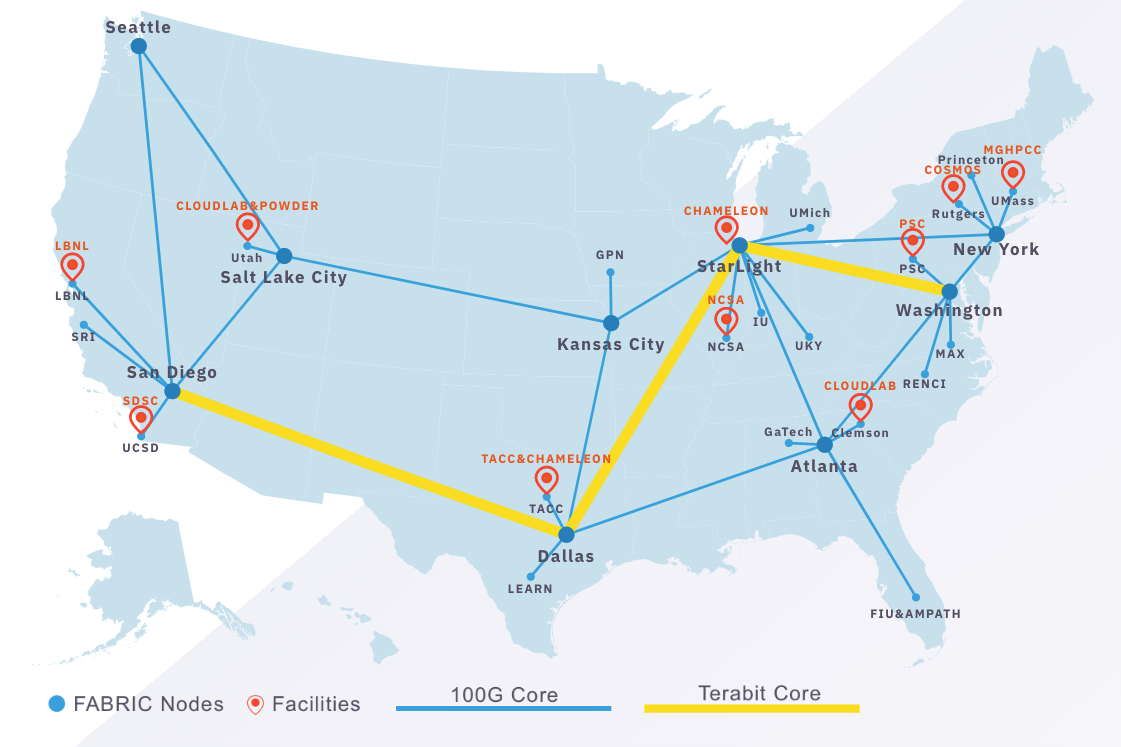

FABRIC consists of storage, computational and network hardware nodes connected by dedicated high-speed optical links. All major aspects of the infrastructure are programmable so researchers can create new configurations or tailor the platform for specific research purposes, such as cybersecurity.

The core nodes are deeply programmable, meaning they are controlled by the network owners and can be programmed to suit their needs. This flexibility, combined with control over functionality at every point in the network, lets experimenters test new architectures that aren't possible on today's internet.

The FABRIC network is also extensible, meaning it's designed to accommodate new capabilities, facilities and functionality such as cloud resources, other networks and testbeds, computing facilities and scientific instruments.

Sounds like the internet of the future.